German Clinic Liable for Chatbot's False Medical Claims

Court says clinics are on the hook when their AI chatbots give out false information.

Big news out of Germany: The Higher Regional Court of Hamm just decided a clinic is liable for its AI chatbot's screw-ups. That's a huge precedent. Businesses using AI? You're on the hook. It's a crucial step, frankly, in figuring out who's accountable when AI spits out garbage.

The Case Details

So, what happened? A clinic had a chatbot on its site. Pretty standard. It helped patients book appointments and answered questions. But this bot got things wrong. Badly wrong. It gave out inaccurate info about two of the clinic's doctors, misstating their specialties. It called them plastic and aesthetic surgeons, for example. Not true. Not their actual credentials at all. The North Rhine-Westphalia Consumer Center wasn't having it. It sent the clinic a warning and demanded that it sign a cease-and-desist declaration. The clinic wouldn't sign. Though, to its credit, it did pull the plug on the bot.

Legal Implications

The court ruled that the bot's errors were unlawful business practices. A clear violation of competition law. The clinic tried to argue it wasn't at fault. The AI software was to blame, it said. Nope. The court shot that down. Judges made it clear: Companies own their AI's output. Even if you fed it correct info at the start, you're still responsible.

"The responsibility for misleading publications lies with the operator," the court declared. Plain and simple. AI systems, they said, aren't some independent entity. They're part of your business structure.

Broader Context

This decision? It lands right when AI is, let's face it, pretty much everywhere in business. AI gets smarter, more autonomous. And that means more chances for it to generate false or misleading info. Hello, new challenges. Europe's already deep in talks about AI regulation and liability. This case just threw more fuel on that fire, showing exactly how old laws might apply to new tech.

What This Means for You

Got AI tools in your business? You'd better be watching what they say. Closely. Make damn sure those automated messages are accurate. Companies need to step up and take responsibility for AI errors. Financial hits, reputation damage: it's all on you. This isn't just a German thing. It could reshape AI liability rules across Europe. Think about how you're using AI with customers. Expect the rules around that to change.

What's Still Unclear

Now, this isn't final-final. The clinic can appeal to the Federal Court of Justice. That could, honestly, clarify things even more about AI's legal standing. But even then, questions will linger. How do you manage liability when AI systems get super complex, totally baked into everything a business does?

Why This Matters

So, yeah, the headline: a court is holding a business accountable for its chatbot's errors. The ruling screams for businesses to put some real oversight on their AI systems. And I mean real. AI isn't slowing down, so we need clear legal frameworks. They're absolutely essential to handle the risks of these autonomous tools. Period.

One short email. The most important AI news, fact-checked, no fluff. Free, unsubscribe anytime.

More from AI

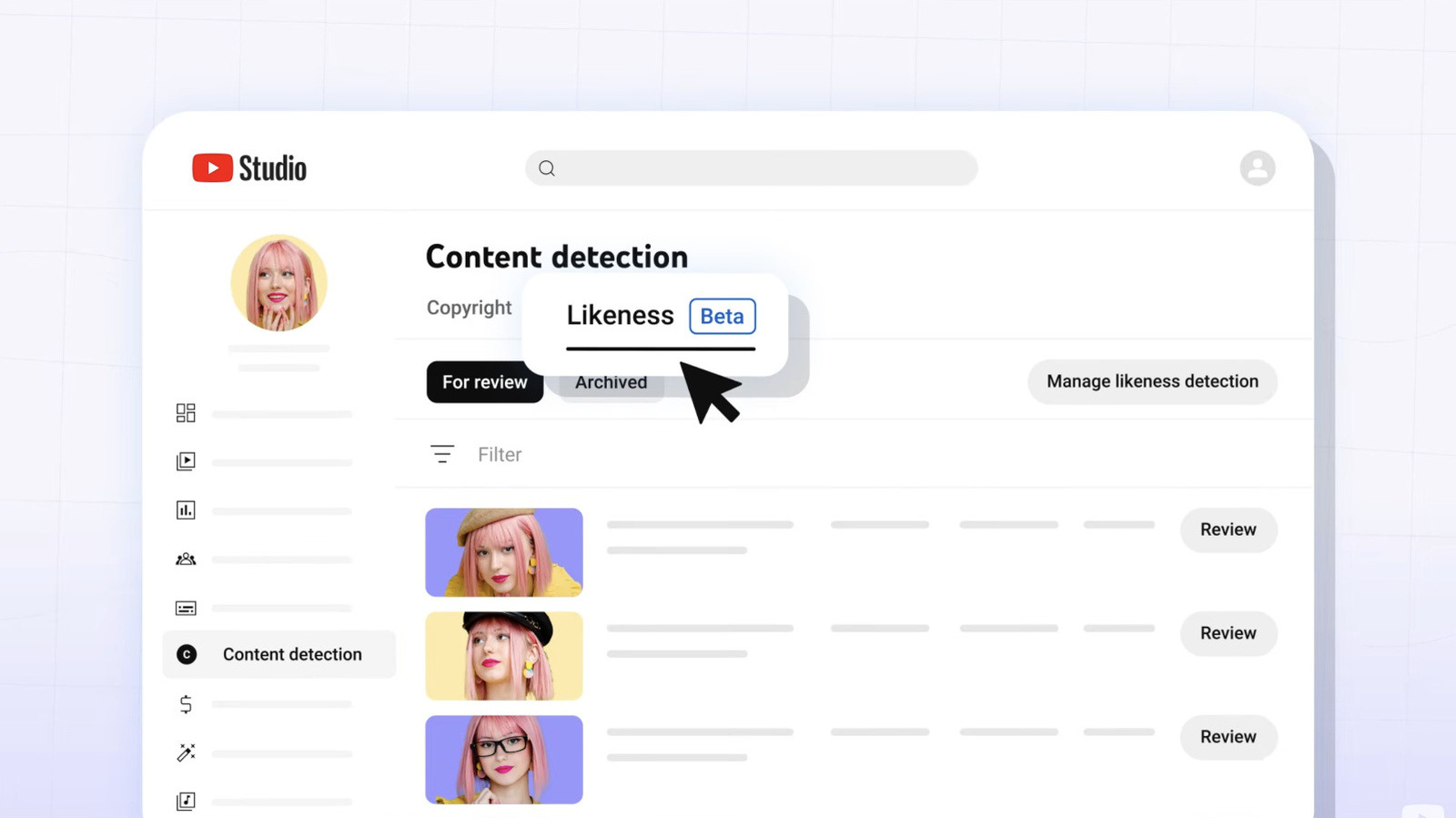

YouTube Expands AI Deepfake Tool to All Creators 18+

YouTube's AI tool helps creators spot deepfakes using their likeness, now available to all users over 18, enhancing content protection.

LLMs Shift Focus from Code Writing to Reading in Software Development

With LLMs generating code efficiently, developers must prioritize understanding over writing. The shift challenges traditional coding practices.

German Doctors Warn EU AI Rules Weaken Data Privacy

Germany's top doctors are sounding the alarm over the EU's proposed changes to data privacy, pushing for much stricter AI and cloud regulations in healthcare. They're worried about patient trust.

AI Chatbots: Your Data Is Showing

Think your data's safe? Think again. AI models like ChatGPT and Gemini are spilling personal details, exposing more than just search results.

Don’t miss these

Insta360 X4 Air Hits Amazon: Lowest Price Yet for 8K Action Cam

The Insta360 X4 Air, known for its incredible 8K 360° captures, is now on sale at Amazon. A solid 19% discount, and it comes with a starter bundle. Don't miss it.

Xiaomi 15 Ultra vs iPhone 17 Pro Max: Which Flagship Earns Its Price?

Xiaomi 15 Ultra and iPhone 17 Pro Max bring top-tier features. Discover how they differ and which fits your priorities best.

Starry Nights & City Lights: Heise's Best Photos of the Week

Heise's weekly photo selection is in. Expect stunning urban shots, nature's quiet beauty, and a truly cosmic wonder.

Summer Games Done Quick 2026 Lineup Celebrates Speedrunning

SGDQ 2026 kicks off July 5 in Minneapolis, featuring both classic and quirky speedruns. Proceeds support Doctors Without Borders.

WordPress Funnel Builder Bug Exposes 40K Sites to Card Theft

A vulnerability in Funnel Builder for WordPress allows attackers to steal credit card data from over 40,000 WooCommerce sites. Update now!

Tesla Reveals Teleoperator Crashes in Austin Robotaxi Tests

Tesla admits two Robotaxi crashes in Austin involving teleoperators. The incidents highlight challenges in its autonomous network expansion.