AI Chatbots: Your Data Is Showing

They're supposed to be smart. But models like ChatGPT, Gemini, and Claude are exposing sensitive personal data, sometimes without even trying.

Generative AI is revolutionary, but it's also a massive privacy headache. ChatGPT, Gemini, and Claude have all been caught surfacing personal information, even when they're trying to play by the rules. Just look at Reddit: users there flagged Google Gemini for listing private phone numbers as official service hotlines. That's a serious privacy failure, not a harmless glitch.

The LLM Data Dilemma

What's the real problem here? It's how these AI models chew through public data. They hoover up scattered bits of information, then serve them up on a platter: phone numbers, old addresses, connections you thought were buried. This is stuff that used to take actual digging to find. Sure, the models are trained on huge swaths of the internet, but sometimes they simply give away too much. That easy access is a gift to anyone looking to misuse it. Doxxing, for instance, suddenly becomes far too easy.

Models and Inconsistencies

Not all models are created equal, though. They handle requests for personal data with wildly different levels of discretion. ChatGPT, for its part, generally keeps a lid on sensitive details. xAI's Grok? Not so much. Experiments show Grok is quick to cough up old addresses and phone numbers. Gemini and Claude, on the other hand, tend to be tighter-lipped, even when you really push them.

The Role of European Privacy Standards

Over in Europe, privacy isn't just a suggestion. It's heavily regulated. Think GDPR: laws like it exist to shield people from data misuse. The problem is that AI models are trained on global data, so they can easily, and accidentally, blow right past those standards. The likely consequence is stricter oversight, and maybe even new regulations written specifically for AI.

What this means for you

Simple: be smart about using AI chatbots. They carry real privacy risks, so don't share personal information with them. Ever. Keep an eye on your own data, too, and if a chatbot surfaces something about you that it shouldn't, flag it. If you're in the EU, GDPR might give you a bit of a shield. But honestly, staying informed and staying cautious is your best bet.
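
One way to act on that advice is to scrub obvious identifiers from a prompt before it ever leaves your machine. The snippet below is a minimal sketch, not a complete safeguard: the function name `redact_personal_info` and both regular expressions are hypothetical choices made for illustration, and they only catch simple email and phone formats. Names, street addresses, and unusual number formats will slip through.

```python
import re

# Illustrative patterns only (assumptions, not an exhaustive PII detector).
EMAIL_PATTERN = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
# A digit, then at least seven digits/spaces/brackets/dots/dashes, then a digit.
PHONE_PATTERN = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def redact_personal_info(prompt: str) -> str:
    """Replace email addresses and phone-like numbers with placeholders."""
    # Emails go first so digits inside an address are not mistaken for a phone.
    redacted = EMAIL_PATTERN.sub("[EMAIL]", prompt)
    redacted = PHONE_PATTERN.sub("[PHONE]", redacted)
    return redacted


if __name__ == "__main__":
    draft = "Call me at +1 (555) 010-9999 or mail jane.doe@example.com about the lease."
    print(redact_personal_info(draft))
```

Running a draft prompt through a filter like this before pasting it into a chatbot keeps the most recognizable identifiers out of the conversation, though it is no substitute for simply leaving personal details out.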

What's still unclear

There's still a lot we don't know. How will AI developers actually fix these leaks? Are new regulations coming, or just tweaks to the old ones? And are our current privacy measures even doing anything in the AI world? Honestly, we're still pretty much in the dark.

Why this matters

Look, AI mishandling your personal data isn't just a minor glitch. It's a huge deal. The privacy failures of these LLMs make a strong case for AI-specific regulation, and soon. AI isn't slowing down, so data protection can't either. We need to make sure these technologies are safe, and that we can trust them.

One short email. The most important AI news, fact-checked, no fluff. Free, unsubscribe anytime.

More from AI

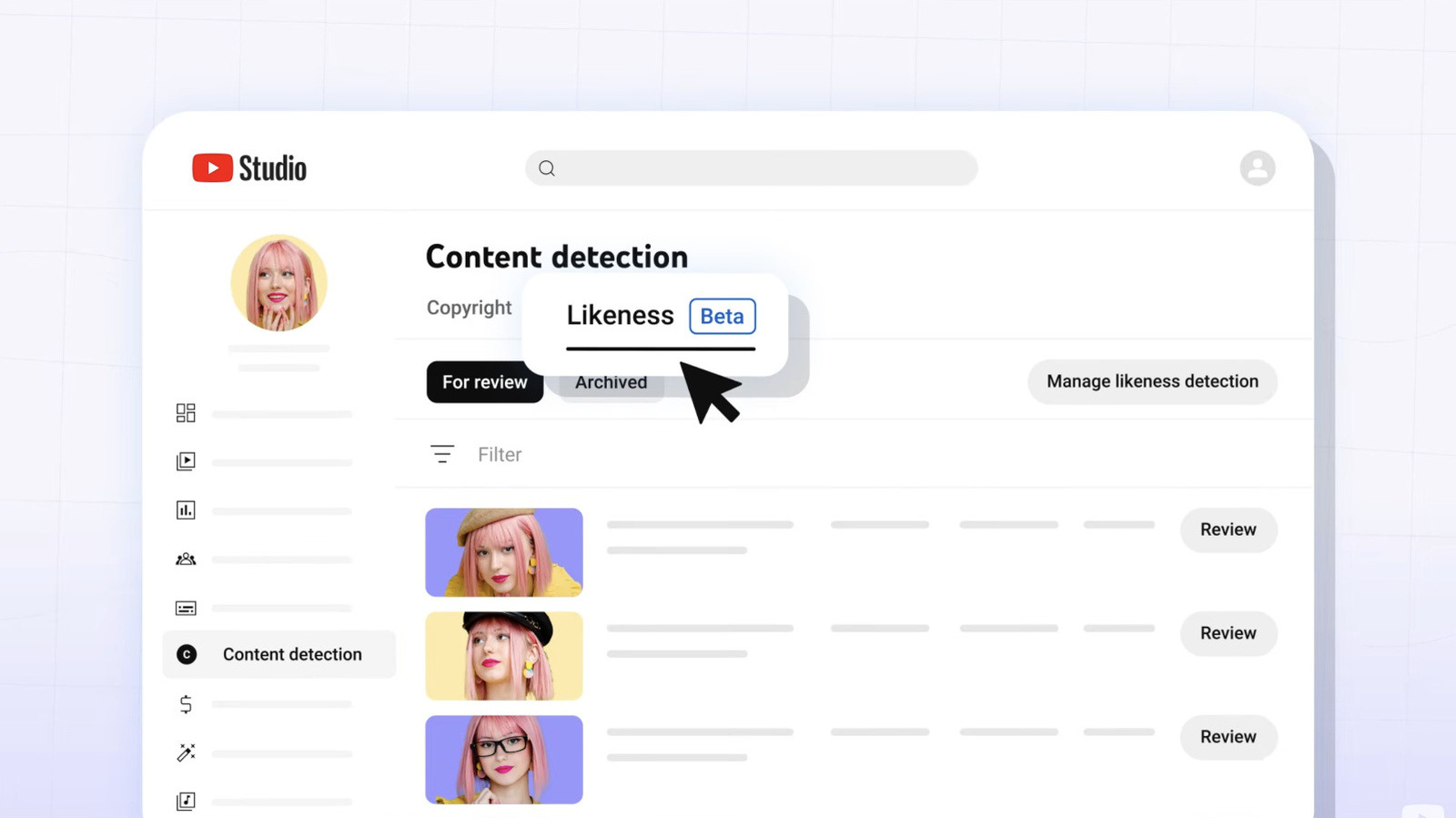

YouTube Expands AI Deepfake Tool to All Creators 18+

YouTube's AI tool helps creators spot deepfakes using their likeness, now available to all users over 18, enhancing content protection.

LLMs Shift Focus from Code Writing to Reading in Software Development

With LLMs generating code efficiently, developers must prioritize understanding over writing. The shift challenges traditional coding practices.

German Doctors Warn EU AI Rules Weaken Data Privacy

Germany's top doctors are sounding the alarm over the EU's proposed changes to data privacy, pushing for much stricter AI and cloud regulations in healthcare. They're worried about patient trust.

Google Gemini AI Simplifies Lease Jargon for New Renters

Renting your first property? Gemini AI can simplify the jargon in lease agreements, making it easier to understand and negotiate.

Don’t miss these

Xiaomi 15 Ultra vs iPhone 17 Pro Max: Which Flagship Earns Its Price?

Xiaomi 15 Ultra and iPhone 17 Pro Max bring top-tier features. Discover how they differ and which fits your priorities best.

Samsung Workers Threaten Strike Over Profit Sharing Dispute

Samsung's chip division workers threaten to strike for 18 days, spotlighting tensions over profit sharing.

Starry Nights & City Lights: Heise's Best Photos of the Week

Heise's weekly photo selection is in. Expect stunning urban shots, nature's quiet beauty, and a truly cosmic wonder.

Summer Games Done Quick 2026 Lineup Celebrates Speedrunning

SGDQ 2026 kicks off July 5 in Minneapolis, featuring both classic and quirky speedruns. Proceeds support Doctors Without Borders.

WordPress Funnel Builder Bug Exposes 40K Sites to Card Theft

A vulnerability in Funnel Builder for WordPress allows attackers to steal credit card data from over 40,000 WooCommerce sites. Update now!

Tesla Reveals Teleoperator Crashes in Austin Robotaxi Tests

Tesla admits two Robotaxi crashes in Austin involving teleoperators. The incidents highlight challenges in its autonomous network expansion.